Published: April 14, 2026

Before generative AI, testing AI worked much like any other software testing: with the same code, data, and settings, you got the same result. Your tests were reproducible and your algorithms were deterministic. That predictability helped earn your users' trust.

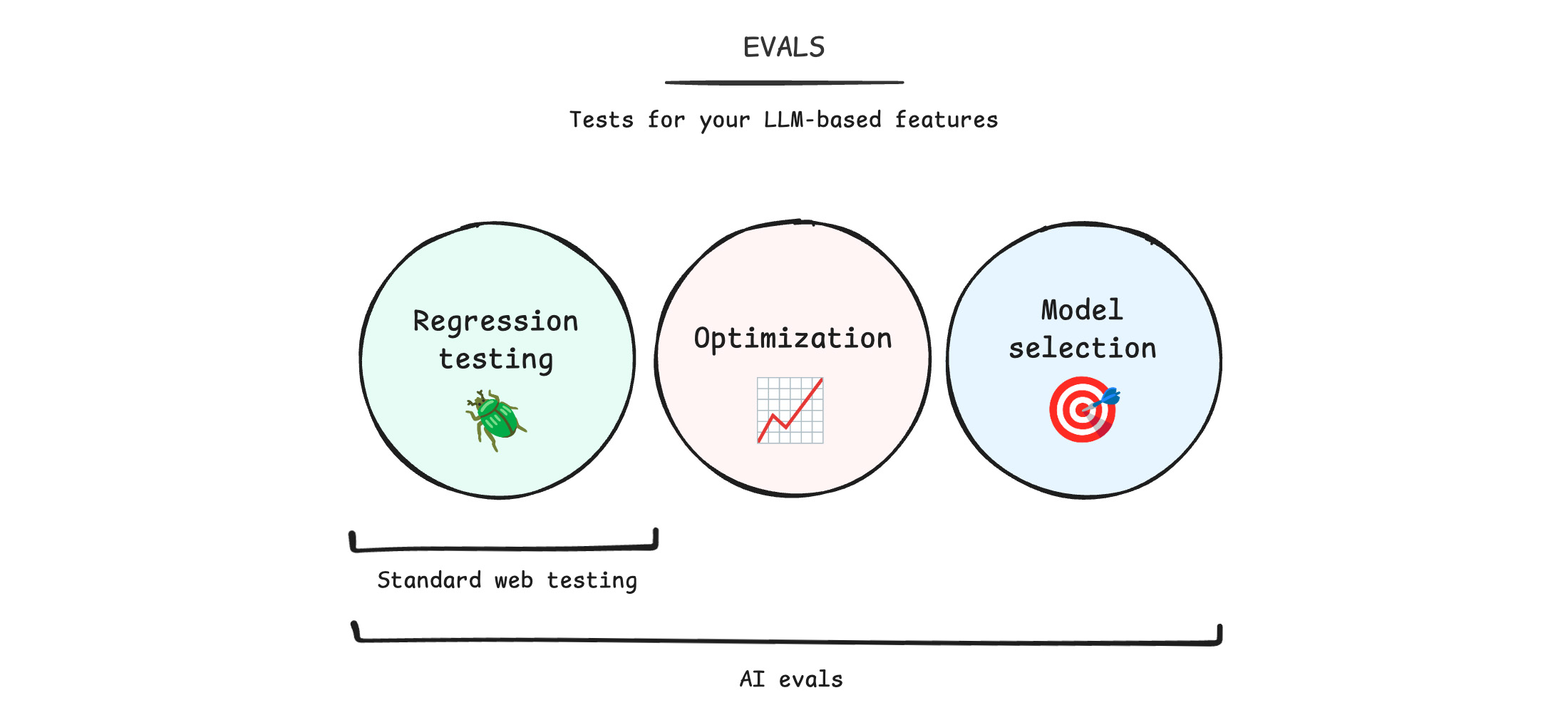

With generative AI, the same input can produce different outputs, and quality becomes subjective rather than objective. That makes testing even more important, because it is how your team can ship features to users with confidence. AI evaluations (or evals for short) give you new workflows for testing these applications.

Over the next several weeks, we'll release lessons on AI evals. We'll start with the basics: everything you need to set up your first AI testing pipeline. Then we'll share more advanced techniques, so you can keep iterating on and improving your evals.

These are novel techniques in a rapidly changing AI landscape. While the specific tools will likely shift, these best practices are built to last.

The first four modules will be live on April 16, 2026.

Engage and share feedback

Excited about this course? Have questions, or topics you'd like us to cover? We'd love to hear from you. Schedule a meeting with us, or message us on social media: Bluesky, LinkedIn, or X.

Join the Early Preview Program for an early look at new AI APIs and access to our mailing list.