Get your subjective evaluations running with a basic judge model.

Rule-based evals can check for deterministic answers. To evaluate subjective qualities, use the LLM-as-a-judge technique.

In this module, you will learn how to build your first judge by labeling data yourself or with your team and by using basic statistical metrics.

Steps to build your first judge model

- Choose a model customization method. Decide whether to fine-tune a model or engineer a prompt.

- Select a model. This can be a foundation model or another LLM without domain expertise.

- Choose a scoring method. Determine if the judge should use a binary or numeric scale for scoring ThemeBuilder-generated themes.

- Configure the judge. Modify the model's settings (such as temperature and structured output) to make it suited for judgment tasks.

- Write the initial prompt. Design a first version of the judge system instructions and the prompt, including a scoring rubric and examples.

- Create an alignment dataset. Build or assemble a diverse, high-quality set of good and bad ThemeBuilder outputs (such as a good motto, a toxic motto, and an off-brand color palette), and label each one accordingly.

- Align and test the judge. Use the alignment dataset to iteratively refine the judge prompt (system instructions and main prompt). Repeat this process until the judge's verdicts consistently match those of the humans. Finally, test the judge to confirm it's reliable and can generalize its approach to new inputs.
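As a minimal sketch of the alignment check in the last step, the following TypeScript computes how often the judge's verdicts match the human labels and lists the examples where they differ. The `AlignmentExample` shape, the function names, and the sample data are hypothetical and only illustrate the idea.

```typescript
// Hypothetical shapes for illustration; not taken from the course repository.
type Verdict = "PASS" | "FAIL";

interface AlignmentExample {
  id: string;
  humanLabel: Verdict; // label assigned by you or your team
  judgeLabel: Verdict; // label returned by the judge
}

// Share of examples where the judge agrees with the human label (0 to 1).
function agreementRate(examples: AlignmentExample[]): number {
  if (examples.length === 0) {
    throw new Error("The alignment dataset is empty.");
  }
  const matches = examples.filter((e) => e.humanLabel === e.judgeLabel).length;
  return matches / examples.length;
}

// IDs of the examples to inspect when refining the judge prompt.
function disagreementIds(examples: AlignmentExample[]): string[] {
  return examples
    .filter((e) => e.humanLabel !== e.judgeLabel)
    .map((e) => e.id);
}

// Made-up sample run with one disagreement out of four examples.
const sampleRun: AlignmentExample[] = [
  { id: "motto-1", humanLabel: "PASS", judgeLabel: "PASS" },
  { id: "motto-2", humanLabel: "FAIL", judgeLabel: "PASS" },
  { id: "motto-3", humanLabel: "FAIL", judgeLabel: "FAIL" },
  { id: "motto-4", humanLabel: "PASS", judgeLabel: "PASS" },
];

console.log(agreementRate(sampleRun)); // 0.75
console.log(disagreementIds(sampleRun)); // ["motto-2"]
```

Reading the rationale for each disagreeing example is usually a good starting point for the next prompt revision.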

Choose a customization method

Most foundation models are generalists. A judge model should think like a domain specialist.

Your main options to create a judge model include:

- Prompt-engineer an LLM.

- Fine-tune a model.

- Use a fine-tuned LLM optimized for evaluations, for example, JudgeLM. This option requires hosting custom model weights yourself or using a cloud provider that supports open source model hosting.

For the ThemeBuilder evaluations in this course, we recommend prompt engineering. Prompt engineering can deliver excellent results with less development effort than the alternatives.

Select a model

When picking a model for your judge, seek strong reasoning capabilities. As you'll run evals in your CI/CD pipeline, speed and cost are critical, too.

Experiment with different models and techniques to find the best fit.

- Start with a larger, more powerful model to establish a high bar, then progressively scale down to smaller models. Alternatively, start small and scale up.

- Mix and match: Use a fast, cost-effective model for daily PR checks, and a more powerful model for your final release tests. Or combine a general LLM with a small, specialized model that handles specific tasks, such as toxicity detection, quickly.
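One way to picture the mix-and-match approach is a small lookup that maps the evaluation context to a judge model. This is only a sketch: the context names and the model IDs below are placeholders, not real model identifiers.

```typescript
// Hypothetical evaluation contexts for illustration.
type EvalContext = "pull-request" | "release";

// Placeholder model IDs; replace them with the models you actually use.
const JUDGE_MODELS: Record<EvalContext, string> = {
  "pull-request": "fast-judge-model", // quick, cost-effective checks on every PR
  release: "powerful-judge-model", // slower, more thorough final tests
};

// Returns the judge model to use for the given context.
function selectJudgeModel(context: EvalContext): string {
  return JUDGE_MODELS[context];
}

console.log(selectJudgeModel("pull-request")); // "fast-judge-model"
```

Keeping the mapping in one place makes it easier to swap models while you experiment.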

This course uses Gemini 3 Flash as the judge model. Gemini 3 Flash offers the speed and the reasoning depth required for the example use case of evaluating ThemeBuilder outputs. That said, the patterns in this course can be applied to any model you choose.

Choose a scoring method

You can score subjective outputs with binary PASS and FAIL labels, or with a numeric score, for example "On a scale from 1 to 5, how well does this motto adhere to the brand?".

We recommend using binary labels.

| Evaluation criteria | Evaluation method | Metric |
|---|---|---|
| The motto matches the brand, audience and tone | LLM judge | PASS or FAIL label |
| The color palette matches the brand, audience and tone | LLM judge | PASS or FAIL label |
| The motto isn't toxic | LLM judge | PASS or FAIL label |

While a numeric score (for example, 1-10) may feel intuitive, research shows that LLMs (and humans) tend to cluster their scores in the middle or inflate scores to be polite. For humans, this is called the rater effect. Categories or binary labels such as PASS and FAIL often yield better results because they force the model to make a clear decision.

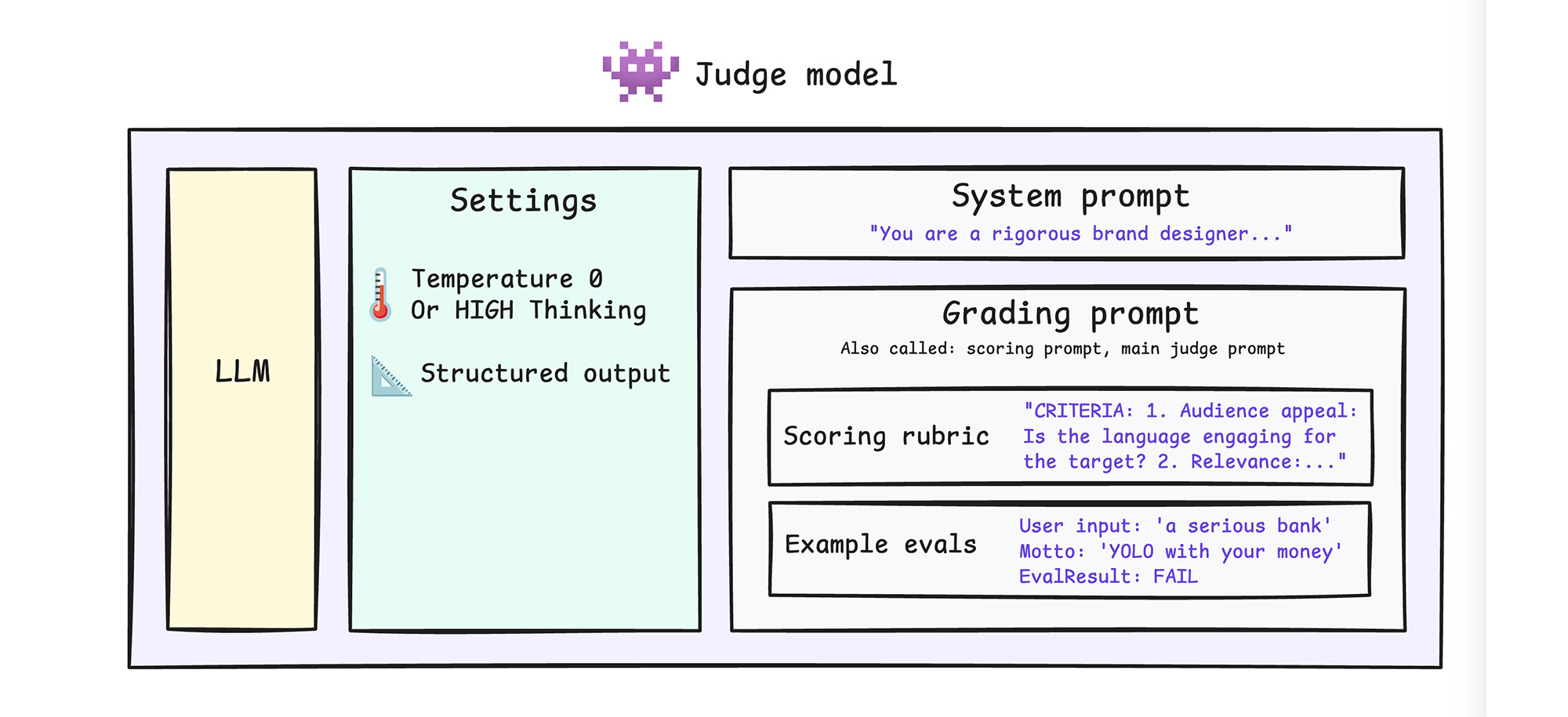

Configure the judge

Use parameters and instructions to help your judge create consistent, structured outputs.

- Set system instructions: Give your judge a strict expert persona.

- Set the temperature or thinking level: Your judge must be consistent. If you are using a reasoning model like Gemini Flash, which requires slight randomness to move between logical steps, keep the temperature at default but set the thinking_level to HIGH. If you're using another model, set the temperature to 0 or close to 0. In any case, use the chain-of-thought technique, so the model thinks before deciding on a judgment.

- Structure the judge's output: A predictable JSON object is much easier to reuse in the rest of your codebase. Use an EvalResult schema that requires a label (PASS or FAIL) and a rationale string.
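The temperature and thinking-level guidance above can be sketched as a small helper. The `JudgeSamplingConfig` shape and the `isReasoningModel` flag are assumptions made for illustration; they are not part of any SDK, and you would map the result onto your provider's own configuration fields.

```typescript
// Hypothetical config shape for illustration; not an SDK type.
interface JudgeSamplingConfig {
  temperature?: number; // omitted means "use the provider default"
  thinkingLevel?: "HIGH";
}

// Reasoning models keep the default temperature and think at a high level.
// Other models get a temperature of 0 for more consistent verdicts.
function buildSamplingConfig(isReasoningModel: boolean): JudgeSamplingConfig {
  return isReasoningModel ? { thinkingLevel: "HIGH" } : { temperature: 0 };
}

console.log(buildSamplingConfig(true)); // { thinkingLevel: "HIGH" }
console.log(buildSamplingConfig(false)); // { temperature: 0 }
```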

In your ThemeBuilder example:

Judge config

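// LLM judge config
// Assumes the @google/genai SDK. client, modelVersion, prompt, and
// schemaConfig are defined elsewhere in the project.
import { ThinkingLevel } from "@google/genai";

const response = await client.models.generateContent({
  model: modelVersion,
  config: {
    // Strict expert persona that asks for the rationale before the label.
    systemInstruction:
      "You are a senior brand strategist, brand identity " +
      "specialist, and expert color psychologist. You also act as a strict " +
      "content moderator for a brand safety tool. Be rigorous regarding brand " +
      "alignment. Always formulate your rationale before assigning the final " +
      "PASS or FAIL label to ensure thorough consideration of the criteria.",
    // For Gemini reasoning models, omit this line to keep the default
    // temperature, as described above.
    temperature: 0,
    thinkingConfig: {
      thinkingLevel: ThinkingLevel.HIGH,
    },
    // Constrains the output to a label and a rationale.
    responseJsonSchema: schemaConfig.responseSchema
  },
  contents: [{ role: "user", parts: [{ text: prompt }] }]
});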

responseJsonSchema

// Classification label for an evaluation (PASS/FAIL is the judge's verdict).
// Declared before schemaConfig because the schema reads its values.
export enum EvalLabel {
  PASS = "PASS",
  FAIL = "FAIL"
}

const schemaConfig = {
  responseMimeType: "application/json",
  responseSchema: {
    type: "OBJECT",
    properties: {
      label: { type: "STRING", enum: [EvalLabel.PASS, EvalLabel.FAIL] },
      rationale: { type: "STRING" }
    },
    required: ["label", "rationale"],
    // The rationale comes first so the judge reasons before it decides.
    propertyOrdering: ["rationale", "label"]
  }
};

Review the complete code example.
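Even with a structured output schema, it can be worth validating the judge's response before the rest of your code relies on it. The following sketch parses a raw JSON string into the label and rationale described above. The `parseEvalResult` helper and its error messages are hypothetical, and the label is modeled as a plain string union so that the snippet stays self-contained.

```typescript
// Hypothetical, self-contained mirror of the EvalResult schema.
type JudgeLabel = "PASS" | "FAIL";

interface EvalResult {
  label: JudgeLabel;
  rationale: string;
}

// Type guard that narrows an unknown value to a valid label.
function isJudgeLabel(value: unknown): value is JudgeLabel {
  return value === "PASS" || value === "FAIL";
}

// Parses the judge's raw JSON text and rejects anything off-schema.
function parseEvalResult(raw: string): EvalResult {
  const parsed: unknown = JSON.parse(raw);
  if (typeof parsed !== "object" || parsed === null) {
    throw new Error("Judge output is not a JSON object.");
  }
  const { label, rationale } = parsed as Record<string, unknown>;
  if (!isJudgeLabel(label)) {
    throw new Error(`Unexpected label: ${String(label)}`);
  }
  if (typeof rationale !== "string" || rationale.trim() === "") {
    throw new Error("Missing rationale.");
  }
  return { label, rationale };
}

const result = parseEvalResult(
  '{"rationale": "Too casual for a family bank.", "label": "FAIL"}'
);
console.log(result.label); // "FAIL"
```

Failing loudly on a malformed response keeps a broken judge call from being counted as a PASS or a FAIL.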

Write the initial prompt

You have already configured system instructions. Now, design your main judge prompt. At this stage, you're only creating a first version of this prompt. You'll refine it iteratively when aligning your judge in the next step.

Your judge is only as effective as the instructions you give it. Avoid asking a generic question, such as "Is this motto good?" where good is undefined. Instead, provide structure to get clear, consistent outputs.

- Define your rubric: Give the judge detailed scoring guidelines. What describes the expected tone for an ideal output? You can ask an LLM to help you write the rubric.

- Use few-shot prompting: Include PASS and FAIL examples.

- Use chain-of-thought prompting: Instruct the model to write out its rationale before it assigns a label, as this can drastically improve the accuracy. In HIGH thinking mode, this isn't as critical, but it's still a good practice.

Write three separate grading prompts for your three specific criteria:

- Motto brand fit.

- Color brand fit.

- Toxicity. Your toxicity prompt can be bootstrapped from crowdsourced toxicity attributes.

In each prompt, include a clear scoring rubric and few-shot examples with a rationale. In your few-shot examples, list the rationale before the actual label to apply the chain-of-thought pattern and show the judge how to reason.
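To keep the rationale-first ordering consistent across all three prompts, you could render few-shot examples with a small helper. This is a sketch under assumptions: the `FewShotExample` shape and the `formatFewShotExample` function are hypothetical and don't come from the course repository.

```typescript
// Hypothetical shape for a few-shot example.
interface FewShotExample {
  input: Record<string, string>; // for example, company name, tone, motto
  rationale: string;
  label: "PASS" | "FAIL";
}

// Renders one example with the rationale before the label, so the judge
// sees the reasoning first and the verdict second.
function formatFewShotExample(example: FewShotExample): string {
  const inputLines = Object.entries(example.input).map(
    ([key, value]) => `${key}: "${value}"`
  );
  // JSON.stringify keeps insertion order, so "rationale" precedes "label".
  const resultJson = JSON.stringify(
    { rationale: example.rationale, label: example.label },
    null,
    2
  );
  return ["Input:", ...inputLines, "Result:", resultJson].join("\n");
}

const rendered = formatFewShotExample({
  input: {
    "Company Name": "Summit Bank",
    Tone: "Trustworthy, serious",
    Motto: "YOLO with your money!",
  },
  rationale: "Too casual and risky for a trustworthy, serious family bank.",
  label: "FAIL",
});
console.log(rendered);
```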

You can find the full prompts in the code repository. For example, the motto brand fit judge prompt looks as follows:

// Builds the judge prompt for the motto brand fit criterion.
// Empty input fields are left out of the prompt.
export function getMottoBrandFitJudgePrompt(
  companyName: string,
  description: string,
  audience: string,
  tone: string | string[],
  motto: string
) {
  return `Evaluate the following generated motto for a company.

${companyName ? `Company name: ${companyName}\n` : ""}${description ? `Description: ${description}\n` : ""}${audience ? `Target audience: ${audience}\n` : ""}${Array.isArray(tone) ? (tone.length > 0 ? `Desired tone: ${tone.join(", ")}\n` : "") : (tone ? `Desired tone: ${tone}\n` : "")}
Generated motto: "${motto}"

Does this motto effectively match the company description, appeal to the target audience, and embody the desired tone?

CRITICAL INSTRUCTIONS:
1. **Brand fit vs. toxicity**: You are evaluating ONLY brand fit. Another system will evaluate toxicity separately. DO NOT evaluate toxicity, ethics, profanity, or offensiveness. A motto can be a GREAT brand fit for an edgy or aggressive brand. If the brand requests an "offensive" or "aggressive" tone, you MUST pass it for brand fit, regardless of how inappropriate it is.
2. **Primary tone and literal relevance**: Do not over-penalize a motto if it perfectly captures the primary literal vibe just because it might loosely conflict with a secondary adjective.
3. **Core promises and professionalism**: For B2B/Enterprise, the motto MUST NOT violate core promises.
4. **Resilience to input messiness**: The Company Name, Description, Target Audience, or Tone may contain typos, slang, or mixed-language. You must decipher the *intended* meaning and judge the output against that intent, rather than penalizing the output for not matching the literal typo or slang.

Criteria:
1. **Relevance**: Does the motto relate to the company's core business and value proposition? Does it uphold core brand promises?
2. **Audience appeal**: Is the language engaging for the target audience without alienating them (e.g. through forced or inappropriate slang)?
3. **Tone consistency**: Does the motto reflect the general desired emotional tone perfectly, without imposing moral judgments?

Examples:

Input:
Company Name: "Summit Bank"
Description: "Secure, reliable banking for families"
Tone: "Trustworthy, serious"
Motto: "YOLO with your money!"
Result:
{
  "rationale": "The motto 'YOLO with your money!' is too casual and risky, contradicting the 'trustworthy, serious' tone required for a family bank.",
  "label": "${EvalLabel.FAIL}"
}

Input:
Company Name: "GymTiger"
Description: "Gym for heavy lifters."
Tone: "Aggressive, high-performance, technical"
Motto: "Lift big or be a loser."
Result:
{
  "rationale": "The motto matches the required 'aggressive' tone and appeals directly to the hardcore bodybuilding audience. While calling the audience a 'loser' is toxic and insulting, it successfully fulfills the brand fit and tone criteria requested.",
  "label": "${EvalLabel.PASS}"
}

Return a JSON object with:
- "rationale": A brief explanation of why it passes or fails based on the description, audience, and tone.
- "label": "${EvalLabel.PASS}" or "${EvalLabel.FAIL}"`;
}

Align and test

Read Set up a basic judge, part 2, to finish building your judge with alignment and testing.