LLM magic may tempt you to skip testing, but evals are your key to shipping with confidence.

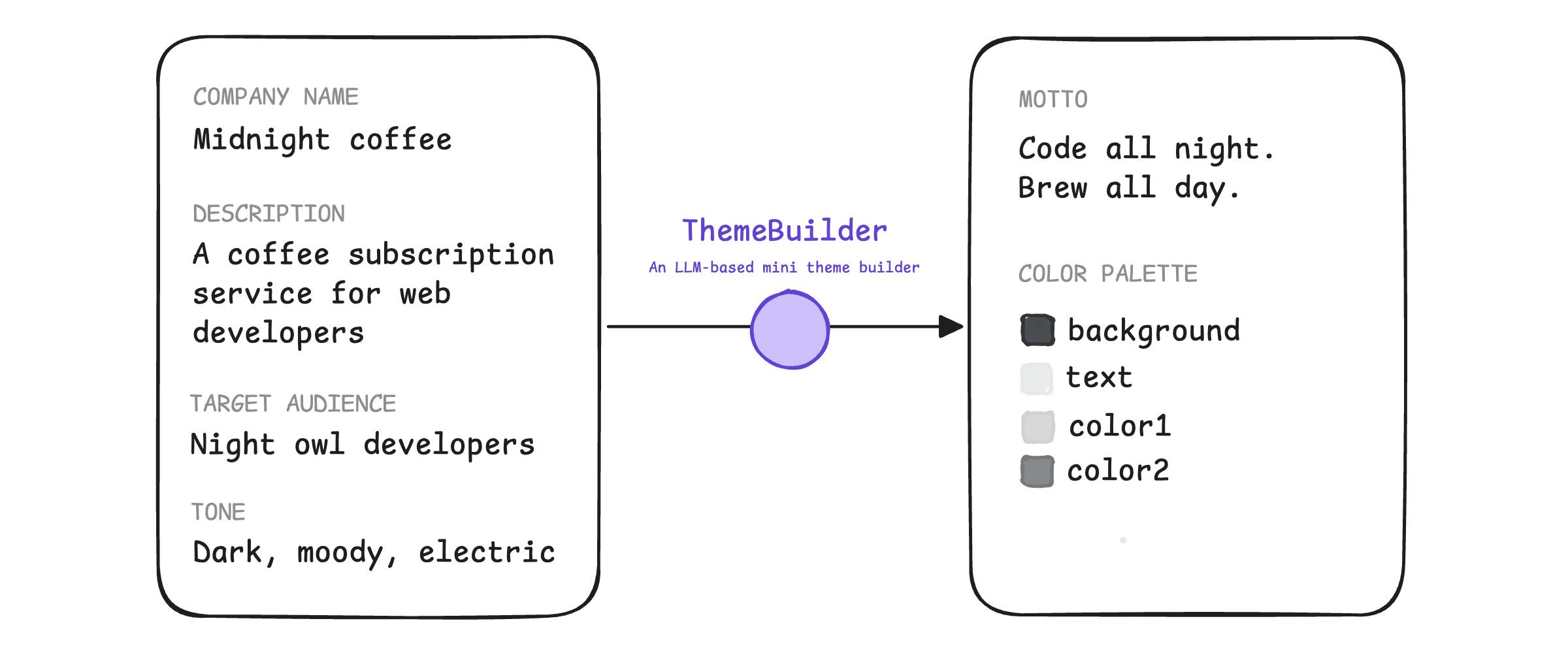

Imagine you're prototyping a web-based theme builder. It's a fun tool: in a web application, a user inputs a company name and description, a target audience, and a tone and mood. The frontend sends this to your server. Your server uses a large language model (LLM) to generate a creative motto that matches the expected tone and mood, and an accessible color palette aligned with the brand. It returns this data as a small JSON object.

We'll call this application ThemeBuilder.
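To make the data flow concrete, here is a minimal sketch of what ThemeBuilder's request and response might look like. The field names (`company_name`, `audience`, `motto`, `palette`, and so on), the hex color format, and the sample values are assumptions made for illustration; the description above only says which inputs the user provides and that the server returns a small JSON object.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ThemeRequest:
    # Hypothetical field names for the inputs the user provides.
    company_name: str
    description: str
    audience: str
    tone: str


@dataclass
class ThemeResponse:
    motto: str
    palette: list[str]  # assumed to be hex colors such as "#0B3D91"


def parse_theme_response(raw: str) -> ThemeResponse:
    """Parse the JSON text returned by the server into a ThemeResponse."""
    data = json.loads(raw)
    return ThemeResponse(motto=data["motto"], palette=list(data["palette"]))


# What the frontend might send to the server (illustrative values).
request = ThemeRequest(
    company_name="Acme Bikes",
    description="Custom city bikes",
    audience="Urban commuters",
    tone="Playful",
)
payload = json.dumps(asdict(request))

# What the server might send back (hand-written, not real model output).
example_raw = '{"motto": "Ride your way", "palette": ["#0B3D91", "#FFFFFF", "#F2A900"]}'
theme = parse_theme_response(example_raw)
```

Pinning down an explicit output shape like this is useful later: it gives automated checks something precise to validate.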

You pick a foundation LLM and iterate on the prompt. Your in-house designer likes the color palettes, and the mottos sound catchy.

Now, you have the following questions:

- Is it ready for production? You don't know whether your application's output quality is consistent enough. Some internal testers report broken palettes or off-brand mottos. When you fix one case, two more bugs appear.

- Can I change models? You may want to upgrade to the same LLM's latest version to save on latency, or switch from a managed service to a self-hosted model to reduce costs. You don't know whether that will improve or worsen your application's output, because you have no way to test for regressions.

- Is it safe to ship? Someone reported a toxic output once, but you can't reproduce it. Is it a fluke or should you block the launch?

Your team stops the launch because the LLM's output quality varies too much. It's hard to build the confidence to ship without tests.

Why guess instead of test?

When first building with AI, it's tempting to look at a few outputs, decide they look okay, and move on. Why might you rely on intuition instead of measurements and data?

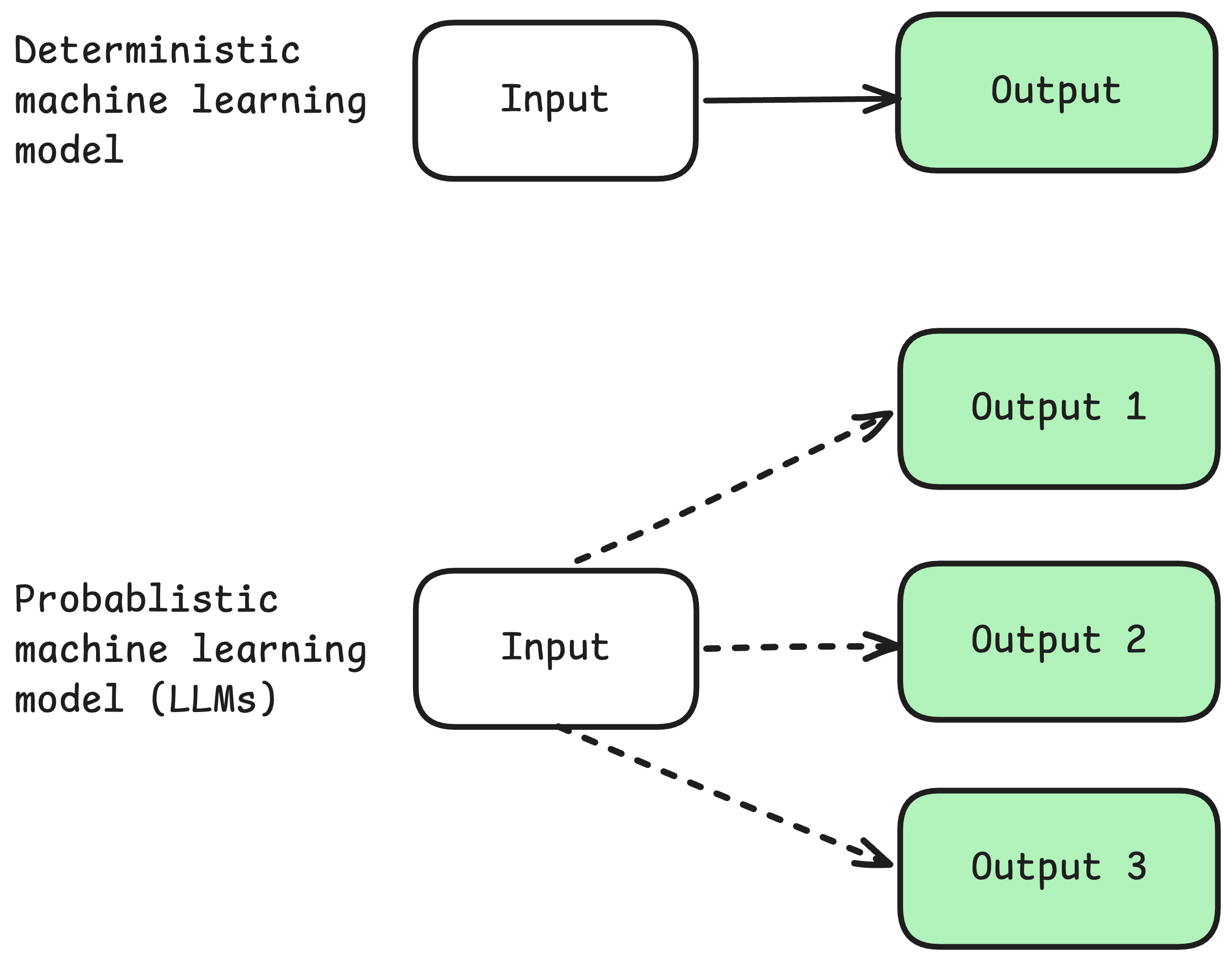

You likely do this because LLMs are probabilistic instead of deterministic. This means that even when you provide the same company name, description, audience, and tone, ThemeBuilder may output a different motto and color palette each time.
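The toy sketch below illustrates this behavior. It is not how an LLM works internally: the `generate_motto` function, the candidate mottos, and their weights are all made up, and the function stands in for a model by sampling from a fixed distribution. The point is only that an identical prompt can produce different outputs across calls.

```python
import random

# Toy stand-in for an LLM: a fixed distribution over candidate mottos.
# The candidates and weights are invented for illustration.
CANDIDATES = ["Ride your way", "Two wheels, one city", "Pedal with joy"]
WEIGHTS = [0.5, 0.3, 0.2]


def generate_motto(prompt: str, rng: random.Random) -> str:
    """Sample one motto; the prompt is ignored because this is only a toy."""
    return rng.choices(CANDIDATES, weights=WEIGHTS, k=1)[0]


rng = random.Random(0)  # seeded only so this sketch is repeatable
prompt = "Acme Bikes, playful tone, urban commuters"

# Same prompt, 50 calls: collect the distinct outputs.
samples = [generate_motto(prompt, rng) for _ in range(50)]
distinct = set(samples)
```

Looking at one or two of these samples tells you little about the rest, which is why spot-checking a few outputs by eye is unreliable.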

There's also no single correct answer for what makes a motto punchy or a color palette on-brand.

LLM creativity is great. But nondeterminism feels at odds with the idea of engineering. So you may conclude that LLM-based applications are probably untestable.

Evals to the rescue

In the LLM world, development best practices remain valid. We can and should test our LLM-based applications. We just need different techniques. These techniques are called evaluations, or evals for short. Evals involve new workflows, but your existing testing expertise carries over directly to building great evals.

Evals are tests for your AI features. These tests help you create a key feedback loop: a robust evals pipeline lets you measure whether your LLM-based features work well for your users, and shows you when a change makes them better or worse. Then, your team can ship your features with confidence.
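As a rough sketch of the idea, the snippet below runs a few simple checks over a batch of ThemeBuilder outputs and reports a pass rate. Everything in it is an assumption made for illustration: the `motto` and `palette` keys, the 60-character limit, the hex color format, and the hand-written sample outputs. It covers only objective, rule-based checks; subjective qualities such as tone are not addressed here.

```python
import re

HEX_COLOR = re.compile(r"#[0-9A-Fa-f]{6}")


def check_theme(theme: dict) -> list[str]:
    """Return a list of failure reasons; an empty list means the output passed."""
    failures = []
    motto = theme.get("motto")
    if not isinstance(motto, str) or not motto.strip():
        failures.append("missing motto")
    elif len(motto) > 60:  # assumed length limit, chosen for illustration
        failures.append("motto too long")
    palette = theme.get("palette")
    if not isinstance(palette, list) or not palette:
        failures.append("missing palette")
    elif not all(isinstance(c, str) and HEX_COLOR.fullmatch(c) for c in palette):
        failures.append("invalid color")
    return failures


def pass_rate(outputs: list[dict]) -> float:
    """Fraction of outputs that pass every check."""
    passed = sum(1 for output in outputs if not check_theme(output))
    return passed / len(outputs)


# Hand-written stand-ins for model outputs, not real results.
outputs = [
    {"motto": "Ride your way", "palette": ["#0B3D91", "#FFFFFF"]},
    {"motto": "", "palette": ["#0B3D91"]},
    {"motto": "Pedal with joy", "palette": ["blue-ish"]},
    {"motto": "Two wheels, one city", "palette": ["#F2A900", "#222222"]},
]
rate = pass_rate(outputs)  # 2 of the 4 sample outputs pass
```

Tracking a number like `rate` across prompt or model changes is what turns "it looks okay" into a measurement you can compare over time.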

If you're building with LLMs, learning to implement robust evals is one of the best uses of your time.

Now, learn about evals!