What stays, what goes: mapping your web testing knowledge to the new world of LLMs.

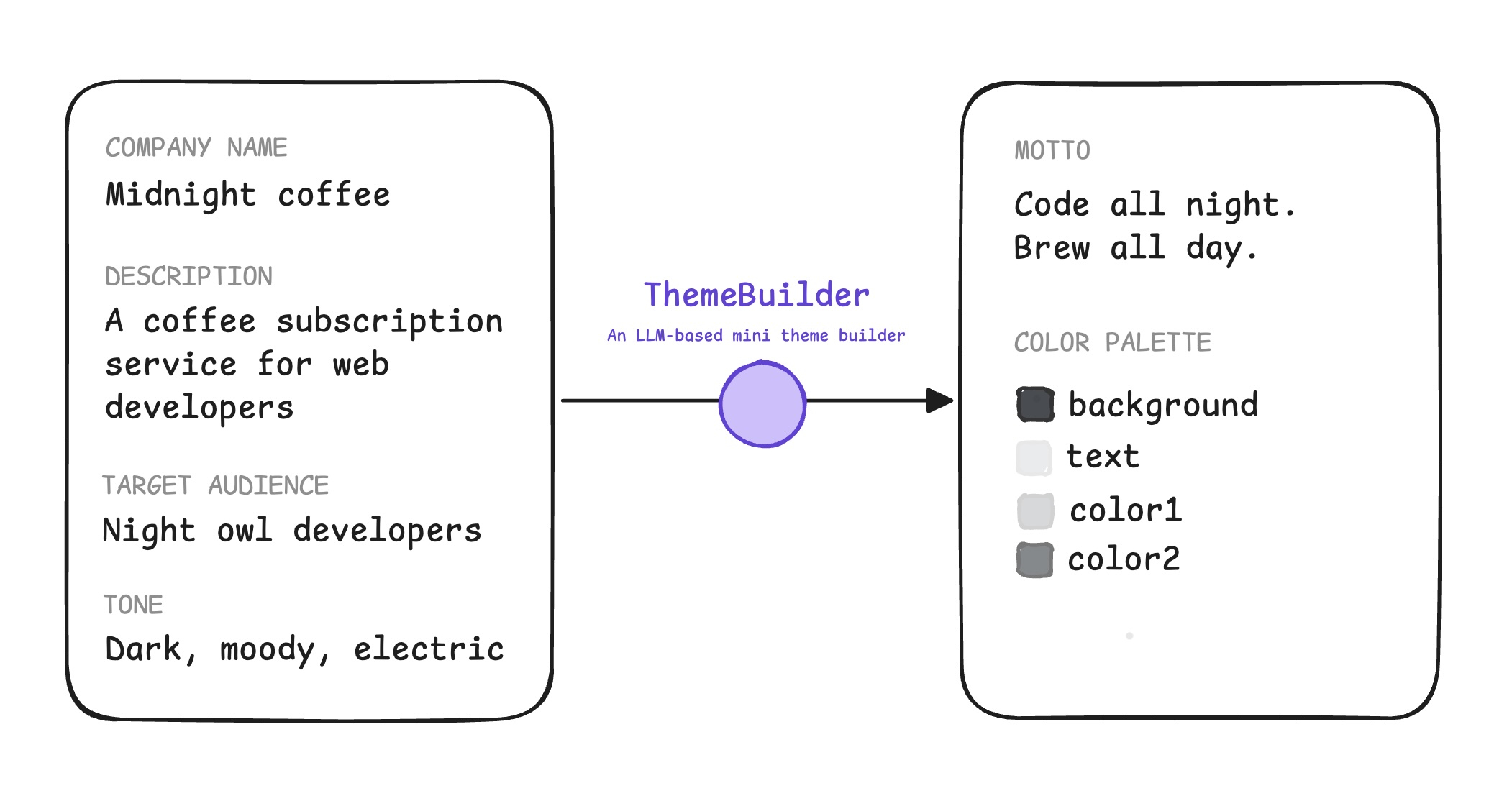

Example application

ThemeBuilder is your example application throughout this series. ThemeBuilder outputs a JSON object containing an LLM-generated motto and a color palette.

- The motto and palette must match the input brand name, description, audience, and tone.

- The motto shouldn't be toxic and must be short (six words or fewer).

- The color palette must have accessible contrast: at least the 4.5:1 contrast ratio that WCAG sets as the minimum for normal text.

Objective and subjective evals

How do you test that ThemeBuilder works as intended?

Some checks are objective, with a binary answer of correct or incorrect. These checks suit questions with a definitive answer, such as data format or contrast ratio, and you can implement them with regular, programmatic code. These are called rule-based evals, or exact evals.

For example:

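// Example rule-based eval: data format
// Assumes appOutput is the raw JSON string, with a `motto` string and a
// `palette` of hex color strings (array or object). Adjust to your schema;
// a schema library such as zod can replace these hand-written checks.
function evaluateFormat(appOutput) {
  let data;
  try {
    data = JSON.parse(appOutput); // Is the JSON valid?
  } catch {
    return "FAIL";
  }
  if (data === null || typeof data !== "object") return "FAIL";
  const { motto, palette } = data;
  // Motto: non-empty string of 6 words or fewer
  if (typeof motto !== "string" || motto.trim() === "") return "FAIL";
  if (motto.trim().split(/\s+/).length > 6) return "FAIL";
  // Palette: non-empty, and every color is a hex string like "#1A2B3C"
  const colors = palette && typeof palette === "object" ? Object.values(palette) : [];
  const isHex = (c) => typeof c === "string" && /^#([0-9a-fA-F]{3}|[0-9a-fA-F]{6})$/.test(c);
  return colors.length > 0 && colors.every(isHex) ? "PASS" : "FAIL";
}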
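The contrast requirement is also a rule-based eval. The following is a minimal sketch that applies the WCAG 2 relative luminance and contrast ratio formulas to a pair of 6-digit hex colors. The function names, and which palette colors you compare (for example, text against background), are assumptions for illustration.

```javascript
// Sketch of a rule-based contrast eval using the WCAG 2 formulas.
// Hypothetical helpers; assumes 6-digit hex colors such as "#1A2B3C".

// Convert one 8-bit sRGB channel to its linear value (WCAG 2 definition).
function channelToLinear(value8bit) {
  const c = value8bit / 255;
  return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

// Relative luminance: 0 for black, 1 for white.
function relativeLuminance(hex) {
  const n = parseInt(hex.slice(1), 16);
  const r = (n >> 16) & 0xff;
  const g = (n >> 8) & 0xff;
  const b = n & 0xff;
  return (
    0.2126 * channelToLinear(r) +
    0.7152 * channelToLinear(g) +
    0.0722 * channelToLinear(b)
  );
}

// Contrast ratio (L1 + 0.05) / (L2 + 0.05), where L1 is the lighter color.
function contrastRatio(hexA, hexB) {
  const la = relativeLuminance(hexA);
  const lb = relativeLuminance(hexB);
  const [lighter, darker] = la >= lb ? [la, lb] : [lb, la];
  return (lighter + 0.05) / (darker + 0.05);
}

// Rule-based eval: PASS if the pair meets the 4.5:1 minimum.
function evaluateContrast(textHex, backgroundHex) {
  return contrastRatio(textHex, backgroundHex) >= 4.5 ? "PASS" : "FAIL";
}
```

For example, `#767676` text on a white background passes (about 4.54:1), while `#777777` falls just short (about 4.48:1), which is exactly the kind of borderline case a deterministic check handles well.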

Other checks are subjective, such as whether the motto and color palette align with the brand and audience. Subjective tests can still produce a classification, but the line between correct and incorrect can vary greatly. Toxicity detection, for example, is a classification task, yet it's subjective because it involves judgment.

While evaluating subjective qualities may sound like something only a human expert can do, you can automate these tests at scale with the LLM-as-a-judge technique.

[LLM judges] are fast, easy to use, and relatively cheap [...] It has become one of the most, if not the most, common methods for evaluating AI models in production.

—AI Engineering, Chip Huyen

For example:

// Example LLM-as-a-judge eval for a subjective quality like brand fit
async function evalBrandFit(userInput, appOutput) {
  const brandPrompt = `You are an expert brand strategist. Evaluate the
following generated motto for the company whose target audience is
${userInput.audience}, and who describes itself as
${userInput.companyDescription}: ${appOutput.motto}
Answer PASS or FAIL, followed by a short rationale.`;
  // Call the LLM judge; await the promise returned by the async helper
  const evalResult = await evalWithLLM(brandPrompt);
  // Return the consolidated results
  return {
    mottoBrandFit: evalResult,
  };
}

// Helper that communicates with the LLM API
async function evalWithLLM(prompt) {
  // ... Call LLM with the prompt ...
  // ... Parse the resulting judgment ("PASS" or "FAIL") + rationale
  return {
    status: "PASS",
    rationale: "This motto perfectly captures the brand and tone, because..."
  };
}
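The helper above leaves the parsing step open. One option is to ask the judge to reply with JSON such as `{"status": "PASS", "rationale": "..."}` and then parse that reply strictly, treating anything malformed as a failed eval rather than a silent pass. The `parseJudgment` name and the JSON reply format are assumptions for this sketch, not part of any particular LLM API.

```javascript
// Hypothetical parser for a judge reply, assuming the prompt asked the
// judge to answer with JSON: {"status": "PASS" | "FAIL", "rationale": "..."}
function parseJudgment(rawReply) {
  // Some models wrap JSON in a Markdown code fence; strip it if present.
  const text = rawReply
    .trim()
    .replace(/^`{3}(?:json)?\s*/i, "")
    .replace(/\s*`{3}$/, "");
  let parsed;
  try {
    parsed = JSON.parse(text);
  } catch {
    // Unparseable replies fail closed instead of counting as a PASS.
    return { status: "FAIL", rationale: "Judge reply was not valid JSON." };
  }
  if (parsed === null || typeof parsed !== "object") {
    return { status: "FAIL", rationale: "Judge reply was not a JSON object." };
  }
  const status =
    typeof parsed.status === "string" ? parsed.status.trim().toUpperCase() : "";
  if (status !== "PASS" && status !== "FAIL") {
    return { status: "FAIL", rationale: "Judge reply had no PASS/FAIL status." };
  }
  const rationale = typeof parsed.rationale === "string" ? parsed.rationale : "";
  return { status, rationale };
}
```

Failing closed is a deliberate choice: a judge that returns garbage should show up as a failure you investigate, not inflate your pass rate.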

The model imitates human judgment, so you need a way to tell the judge exactly what you're looking for. You can do that by giving the judge a rubric.

A rubric is a structured set of criteria or scoring guidelines that a judge (human or AI) uses to evaluate an output. It provides a consistent framework for assessing subjective qualities across every evaluation.
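One way to put a rubric into practice is to store the criteria as data and build the judge prompt from them, so every evaluation sees identical wording. The rubric text, the `buildJudgePrompt` helper, the `tone` input field, and the JSON reply format below are illustrative assumptions for ThemeBuilder's brand-fit criterion.

```javascript
// Hypothetical rubric for the brand-fit criterion, stored as data so
// every judge call uses identical wording.
const brandFitRubric = {
  criterion: "Brand fit",
  pass: [
    "The motto reflects the company description.",
    "The motto speaks to the stated target audience.",
    "The motto matches the requested tone.",
  ],
  fail: [
    "The motto is generic and could fit any company.",
    "The motto contradicts the requested tone.",
  ],
};

// Build a judge prompt that embeds the rubric and asks for a JSON verdict.
function buildJudgePrompt(rubric, userInput, appOutput) {
  const bullets = (items) => items.map((item) => `- ${item}`).join("\n");
  return [
    `You are an expert brand strategist. Evaluate the criterion "${rubric.criterion}".`,
    `Company description: ${userInput.companyDescription}`,
    `Target audience: ${userInput.audience}`,
    `Tone: ${userInput.tone}`,
    `Motto: ${appOutput.motto}`,
    "Answer PASS only if all of the following hold:",
    bullets(rubric.pass),
    "Answer FAIL if any of the following hold:",
    bullets(rubric.fail),
    'Reply with JSON only: {"status": "PASS" or "FAIL", "rationale": "..."}',
  ].join("\n");
}
```

Keeping the rubric separate from the prompt template also makes it easy to review and version the criteria with your team.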

Other types of evals

You may want to use reference-based or pairwise evals.

Reference-based

These measure similarity to a ground-truth answer. Use them for tasks where a known good response exists, such as translation or questions about technical facts.
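A simple reference-based metric is token-overlap F1, which compares the words in an output with the words in a reference answer. This is only one of many possible similarity measures (others include exact match, n-gram scores, or embedding similarity), and the naive tokenization and function names below are simplifying assumptions.

```javascript
// Hypothetical reference-based metric: token-overlap F1 between an output
// and a ground-truth reference. Returns a score between 0 and 1.
function tokenize(text) {
  return text.toLowerCase().match(/[a-z0-9']+/g) ?? [];
}

function tokenF1(output, reference) {
  const outTokens = tokenize(output);
  const refTokens = tokenize(reference);
  if (outTokens.length === 0 || refTokens.length === 0) {
    return outTokens.length === refTokens.length ? 1 : 0;
  }
  // Count reference tokens so repeated words are matched at most once each.
  const refCounts = new Map();
  for (const t of refTokens) refCounts.set(t, (refCounts.get(t) ?? 0) + 1);
  let overlap = 0;
  for (const t of outTokens) {
    const remaining = refCounts.get(t) ?? 0;
    if (remaining > 0) {
      overlap += 1;
      refCounts.set(t, remaining - 1);
    }
  }
  if (overlap === 0) return 0;
  const precision = overlap / outTokens.length;
  const recall = overlap / refTokens.length;
  return (2 * precision * recall) / (precision + recall);
}
```

For example, `tokenF1("the cat", "the cat sat")` gives a precision of 1 and a recall of 2/3, for an F1 of 0.8. Word overlap is crude for open-ended text, so treat it as a starting point.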

Pairwise

A judge might give a PASS score to two different versions, even when one is better than the other. Pairwise evaluation solves this by giving the judge two outputs (A and B) for the same input and instructing the judge to pick a winner.

For example, imagine you're evaluating a motto for a friendly cafe:

Input: "Friendly cafe"

Pointwise evaluation:

Output A: "Come get coffee." // PASS

Output B: "Your morning smile in a cup." // PASS

Both outputs pass, so the result is inconclusive.

Pairwise evaluation:

Output B wins. It captures the "friendly" tone more effectively than the generic Output A.

Use pairwise evaluation to decide which version of your model to deploy, or to compare two different prompts.
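A pairwise eval can be sketched as a function that asks a judge for a winner twice, swapping the order of A and B the second time. LLM judges can favor whichever output appears first, so counting only consistent verdicts makes the comparison more robust. The `judge` callback, assumed to return `"first"` or `"second"`, stands in for a real LLM call; the tie handling and the win-rate helper are illustrative choices.

```javascript
// Hypothetical pairwise eval. `judge(input, first, second)` is assumed to
// return a promise resolving to "first" or "second" (for example, an LLM call).
async function comparePair(judge, input, outputA, outputB) {
  const round1 = await judge(input, outputA, outputB); // A shown first
  const round2 = await judge(input, outputB, outputA); // B shown first
  // Any reply other than "first" is treated as "second" in this sketch.
  const winner1 = round1 === "first" ? "A" : "B";
  const winner2 = round2 === "first" ? "B" : "A";
  // Inconsistent verdicts suggest position bias; record a tie.
  return winner1 === winner2 ? winner1 : "tie";
}

// Win rate of version A over version B across a dataset; ties count as half.
async function winRate(judge, cases) {
  let score = 0;
  for (const { input, outputA, outputB } of cases) {
    const result = await comparePair(judge, input, outputA, outputB);
    if (result === "A") score += 1;
    else if (result === "tie") score += 0.5;
  }
  return cases.length === 0 ? 0 : score / cases.length;
}
```

A win rate well above 0.5 across many inputs is stronger evidence for switching prompts or models than a single comparison like the cafe example.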

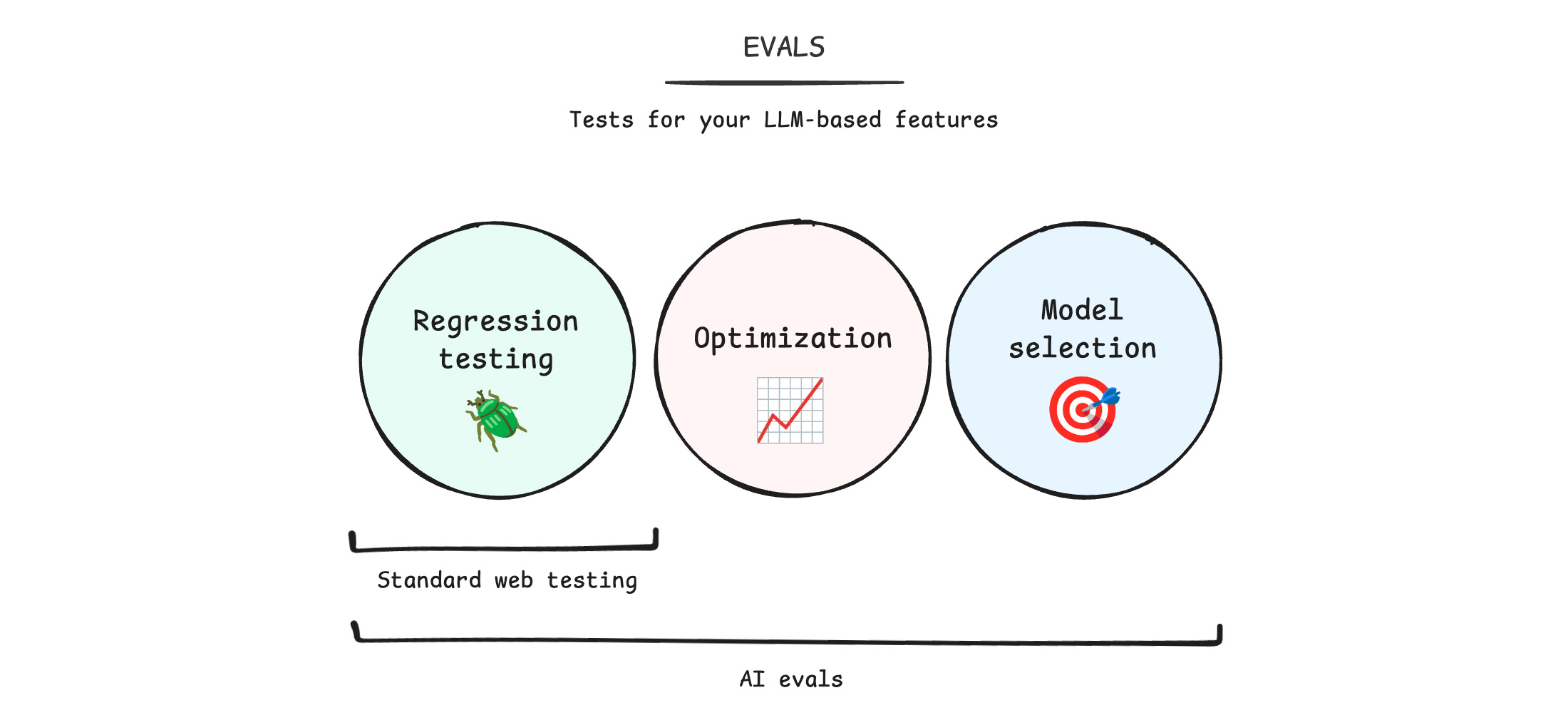

Standard web testing versus AI evals

We assume that you, as a reader of this course, already know how to test websites and web applications. When you add AI, you need to update your existing mental model. Use AI evaluation to take the following actions:

- Perform regression testing: When you change your prompt or model, did the application break? Are you getting broken color palettes or toxic mottos? Unlike in a traditional web app, where a break means broken functionality, here you're checking whether the LLM output is high quality and safe. This involves subjectivity.

- Optimize your application: Is your application getting better? Are you improving the metrics you care about? For example, are you getting more on-brand mottos without increasing toxicity?

- Pick the right model: Is there a better model for your use case? Before AI, you picked your web stack once. With AI, you should regularly benchmark models to identify opportunities to switch to a better (and potentially cheaper) model.
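For regression testing and optimization, one approach is to run the same eval dataset against the current (baseline) and new (candidate) versions and compare per-criterion pass rates. This sketch assumes each result is an object mapping criterion names to `"PASS"` or `"FAIL"`; the function names and the default `tolerance` are arbitrary illustrative choices.

```javascript
// Hypothetical regression check: compare per-criterion pass rates between a
// baseline run and a candidate run. Each result maps criterion -> "PASS"/"FAIL".
function passRates(results) {
  const totals = {};
  for (const result of results) {
    for (const [criterion, verdict] of Object.entries(result)) {
      if (!totals[criterion]) totals[criterion] = { pass: 0, count: 0 };
      totals[criterion].count += 1;
      if (verdict === "PASS") totals[criterion].pass += 1;
    }
  }
  const rates = {};
  for (const [criterion, { pass, count }] of Object.entries(totals)) {
    rates[criterion] = pass / count;
  }
  return rates;
}

// List criteria whose pass rate dropped by more than `tolerance`.
function findRegressions(baselineResults, candidateResults, tolerance = 0.02) {
  const before = passRates(baselineResults);
  const after = passRates(candidateResults);
  return Object.keys(before).filter(
    (criterion) => (after[criterion] ?? 0) < before[criterion] - tolerance
  );
}
```

The same comparison, run with two candidate models instead of a baseline and a candidate, doubles as a simple benchmark when you pick a model.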

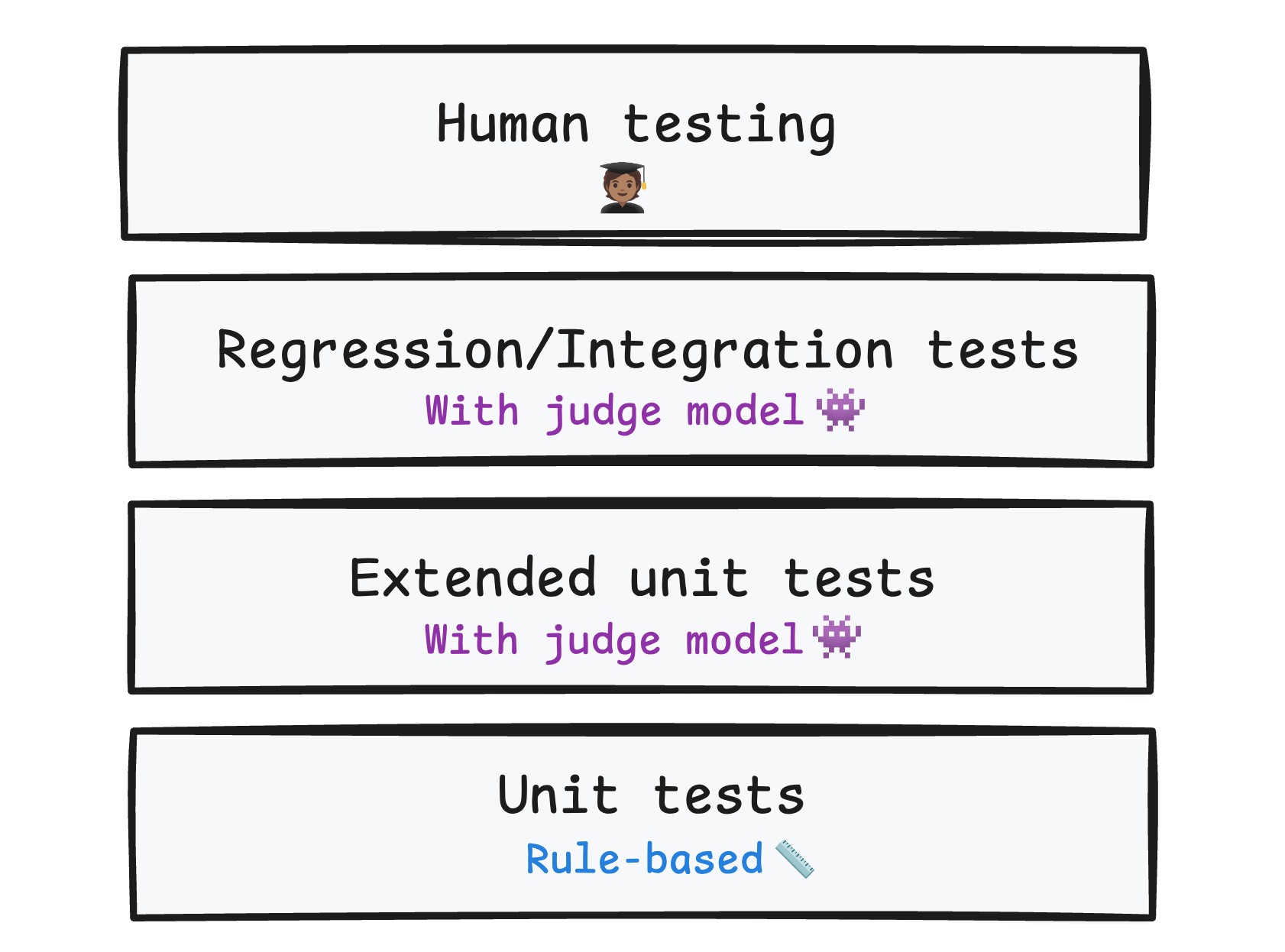

Layer your tests

A healthy codebase should have multiple layers of tests: unit tests, regression and integration tests, and end-to-end tests. Your evals should also be layered.

- Use rule-based evals combined with LLM-as-a-judge evals to fully automate tests for your AI application. This way, you can catch issues in daily development and CI/CD, and test that your release candidates meet your quality bar.

- Run integration and regression tests to verify quality at scale.

- Run manual human evaluations as an acceptance test.
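One way to combine automated layers is to run the cheap, deterministic rule-based evals first and call the slower, paid LLM judge only when they pass. This sketch assumes evaluators are plain functions (synchronous or async) that return `"PASS"` or `"FAIL"`; skipping the judge after a rule-based failure is a design choice, not a requirement.

```javascript
// Hypothetical layered eval run for one output: deterministic rule-based
// checks first, LLM judges only if every rule-based check passes.
async function runLayeredEvals(userInput, appOutput, ruleEvals, judgeEvals) {
  const results = {};
  for (const [name, evaluate] of Object.entries(ruleEvals)) {
    results[name] = await evaluate(userInput, appOutput);
  }
  const rulesPassed = Object.values(results).every((v) => v === "PASS");
  for (const [name, evaluate] of Object.entries(judgeEvals)) {
    // Skip the expensive judge call when the output already failed a rule.
    results[name] = rulesPassed ? await evaluate(userInput, appOutput) : "SKIPPED";
  }
  return results;
}
```

Recording `"SKIPPED"` rather than `"FAIL"` keeps the judge's metrics separate from the rule-based ones when you aggregate results.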

On top of the evals you run at build time, monitor production traffic with runtime evals. These can help you spot quality or safety issues on real-world inputs.
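Running every eval on every production request can be slow and costly, so you might score only a sample of traffic. This sketch picks a deterministic sample by hashing a request ID, so a given request is always either in or out of the sample. The 32-bit FNV-1a hash, the request ID format, and the sampling rate are assumptions for illustration.

```javascript
// Hypothetical deterministic sampler for runtime evals.
// 32-bit FNV-1a hash of a string (non-cryptographic).
function fnv1a(str) {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < str.length; i++) {
    hash ^= str.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0; // multiply by the FNV prime
  }
  return hash >>> 0;
}

// True if this request falls inside the sample (rate between 0 and 1).
function shouldEvaluate(requestId, rate) {
  return fnv1a(requestId) / 0x100000000 < rate;
}
```

For example, `shouldEvaluate(requestId, 0.05)` selects a fraction of requests for runtime evals, and re-running the check for the same ID always gives the same answer.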

Keep evolving your evals

Evals should grow alongside your application. As your models get smarter, update your old evals so they keep catching the failures that matter.

Regularly add tricky examples to your test datasets, such as new edge cases or surprising user inputs you find in production.

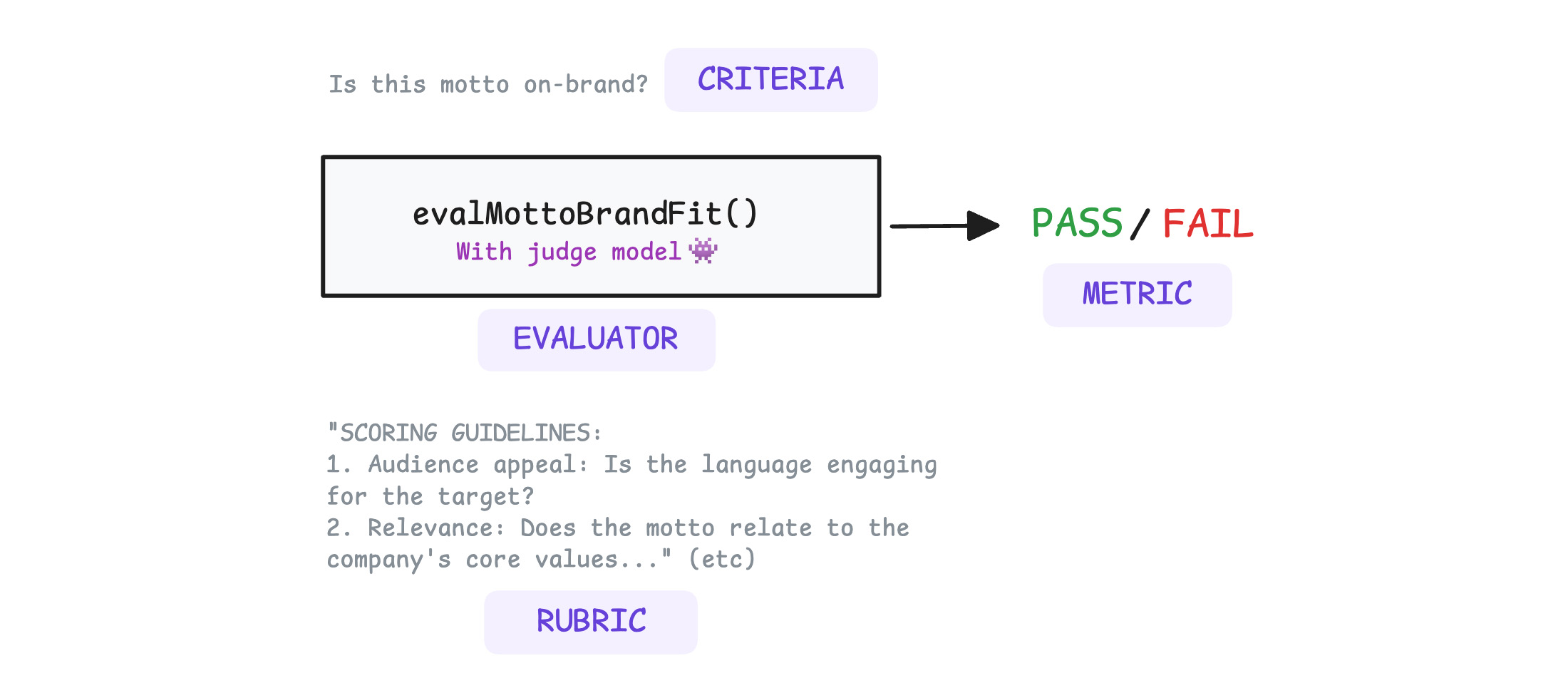

What do your evals measure?

Before designing evals, you should understand how to evaluate an output. There are a few terms you need to know.

Criteria are the rules: the dimensions that need to be tested, such as brand alignment, toxicity, and accessibility.

Each evaluation criterion is measured by a metric. A metric is a single, concrete score that measures the model output against the criterion. This score can be binary, or a value within a range that measures how close the output is to the evaluator's expectation.

It's possible to measure the same criterion with different types of metrics. For example, for brand alignment:

- "Is this motto on-brand?" The metric is PASS or FAIL.

- "On a scale from 1 to 5, how well does the motto align with the brand?" The metric is an integer between one and five.
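The two metric types summarize differently across a dataset: a binary metric is usually reported as a pass rate, while a 1-to-5 score can be reported as a mean. The `summarizeMetric` helper below is a hypothetical sketch that validates the values before aggregating them.

```javascript
// Hypothetical aggregation of per-output metric values across a dataset.
// Binary metrics ("PASS"/"FAIL") -> pass rate; 1-5 integer scores -> mean.
function summarizeMetric(values) {
  if (values.length === 0) throw new Error("No metric values to summarize.");
  if (values.every((v) => v === "PASS" || v === "FAIL")) {
    const passes = values.filter((v) => v === "PASS").length;
    return { type: "binary", passRate: passes / values.length };
  }
  if (values.every((v) => Number.isInteger(v) && v >= 1 && v <= 5)) {
    const total = values.reduce((sum, v) => sum + v, 0);
    return { type: "scale", mean: total / values.length };
  }
  throw new Error("Values must be all PASS/FAIL or all integers from 1 to 5.");
}
```

For example, `summarizeMetric(["PASS", "FAIL", "PASS", "PASS"])` returns a pass rate of 0.75, and `summarizeMetric([4, 5, 3])` returns a mean of 4.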

An evaluator is the code or model that scores an output against a criterion. The evaluator produces the metric's value.
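To tie the terms together, you can pair each criterion with an evaluator that produces its metric. In this hypothetical sketch, the brand alignment evaluator is a placeholder: a real setup would call an LLM judge there, and would use rule-based code like the format check earlier in this section for objective criteria.

```javascript
// Hypothetical evaluators, one per criterion. Each returns the value of
// that criterion's metric for a single output.
const evaluators = [
  {
    criterion: "Motto length",
    metric: "binary (PASS or FAIL)",
    // Rule-based evaluator: plain code
    evaluate: (userInput, appOutput) =>
      appOutput.motto.trim().split(/\s+/).length <= 6 ? "PASS" : "FAIL",
  },
  {
    criterion: "Brand alignment",
    metric: "integer from 1 to 5",
    // Placeholder score: a real evaluator would ask an LLM judge for it
    evaluate: async (userInput, appOutput) => 3,
  },
];

// Run each evaluator on one output and collect the metrics by criterion.
async function scoreOutput(evaluatorList, userInput, appOutput) {
  const metrics = {};
  for (const { criterion, evaluate } of evaluatorList) {
    metrics[criterion] = await evaluate(userInput, appOutput);
  }
  return metrics;
}
```

For example, `scoreOutput(evaluators, userInput, { motto: "Your morning smile in a cup." })` resolves to `{ "Motto length": "PASS", "Brand alignment": 3 }`, where the 3 comes from the placeholder.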